This month OGP’s Human Factors Sub-Committee (HFSC) released a report on its website, based on a study of the cognitive issues associated with safety and environmental incidents in the oil and gas industry.

Regarding safety issues, it should be understood that engineering solutions alone may not be enough to prevent hazardous occurrences, no matter how well designed an engineering system may be. The role that people play in the operation of any safety system is often critical, and it requires significant support as well as knowledge and understanding of safety issues. OGP’s study aims at a better understanding of the psychological basis of human performance, which is critical to the performance and operation of safety systems as well as to their future improvement.

The purpose of the study was to stress and analyze the important contribution that cognitive issues can have in safety and environmental incidents.

OGP’s report focuses on issues identified at the individual level and on the interactions between individuals. According to the study, the psychological processes related to perception and risk assessment, the associated reasoning and judgement, and decision-making can be divided into the following four categories:

- Situation awareness

- Cognitive bias in decision-making

- Inter-personal behaviour

- Awareness and understanding of safety-critical human tasks

Of the above, the first three are related to non-technical human skills, while the fourth is associated with organisational preparedness for critical operations. The four categories have, in one way or another, a degree of psychological complexity to them.

Recognising the importance of the above themes, when followed by appropriate management and safety systems, may help companies increase their reliability as organisations. It can also lead to a sense of mindfulness and a state of chronic unease that contribute to an improved ability to detect and respond to safety incidents.

1. Situation awareness

Loss of Situation Awareness (SA), i.e. a failure to seek and make effective use of the information needed to maintain proper awareness of the state of the operation and the nature of the real-time risks, can lead to serious safety-related incidents. One such operation is the immobilization of a ship’s Main Engine for repairs and/or maintenance: if the parameters that may affect the safe operation of the vessel are not all taken into consideration, a serious accident may occur. For example, if weather-related information has not been taken into account, the vessel may find herself adrift and unable to start her propulsion systems.

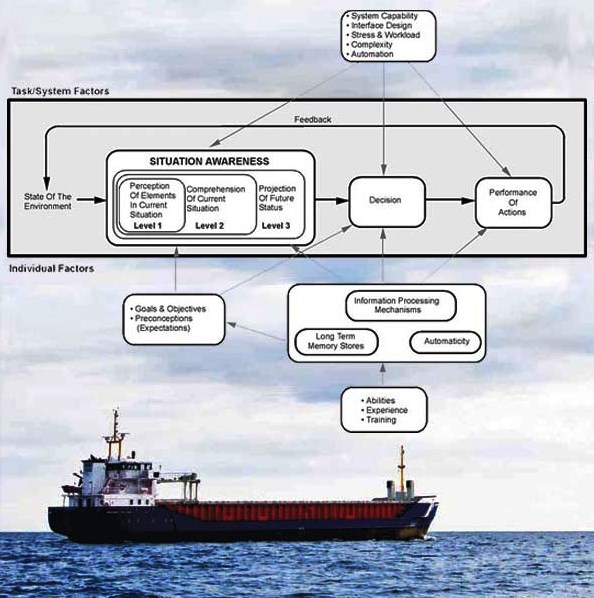

Endsley’s model of situation awareness. (Source: Wikipedia)

This can be applied to both the awareness and prioritisation of risk, as well as to decision-making and assessment of an operation. Much of the leading work on SA has been carried out by the American psychologist Mica Endsley. In psychological terms, situation awareness is defined in terms of three related levels of cognition:

- Perception of information about what is happening in the world (Level 1). This is the information available to the senses that indicates what is happening in the world. According to studies, 67% of the incidents included a failure in Level 1 situation awareness.

- Interpretation of what the information means in terms of the state of the world (Level 2). This is about interpreting what Level 1 information means in terms of the state of the operation. It means knowing whether valves are open or closed, whether pumps are running, or whether a vessel is filling or emptying based on the Level 1 information (symbols, colours, data) presented on an HMI. 20% of the incidents included failures at Level 2.

- Projection of the likely status of the world in the immediate future (Level 3). This is about predicting the future state of the world based on what the operator believes is the current state. 13% of the incidents included failures at Level 3.

As with all other areas of human performance, the cognitive processes involved in acquiring and maintaining situation awareness are complex and prone to error. Such failures include:

- Failure to attend to or appreciate the significance of “weak signals”. A “weak signal” is something which, in itself, may be relatively insignificant and would not on its own justify action. Sometimes, however, weak signals are early indications that something is indeed wrong. Consider, for example, the Oil Content Meter (OCM) readings from the Oily Water Separator (OWS). Old OWS equipment installed onboard may give faulty readings with small deviations from the expected measurements, which may lead the engineering crew to believe that the readings are “more or less” normal and therefore not to give the equipment the attention it needs. Clearly, expecting someone to take action every time they suspect something could be wrong is impractical and would make most safety-critical operations impossible.

- Confirmation bias. The term “confirmation bias” refers to the psychological tendency to rationalise information to make it fit what we want to believe. Confirmation bias leads people to rationalise away information or data that are wrong, unclear or ambiguous, or that do not match what the personnel involved in the operation believe (or want to believe) is the actual state of the operation. In this way ambiguous or problematic information can be rationalised in a way that allows an operation to proceed on the assumption that everything is really as expected, and in order. For example, the faulty reading of the OWS mentioned above may be attributed to the OCM being old or needing calibration.
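One way to picture how weak signals can be made visible is to accumulate small deviations over time instead of judging each reading on its own. The sketch below is not taken from the OGP report: it uses a one-sided CUSUM (cumulative sum) check, and the function name `first_cusum_alarm`, the readings, and the `target`, `slack` and `threshold` values are all hypothetical, chosen only for illustration.

```python
from typing import Optional, Sequence


def first_cusum_alarm(
    readings: Sequence[float],
    target: float,
    slack: float,
    threshold: float,
) -> Optional[int]:
    """Return the index of the first reading at which the one-sided CUSUM
    statistic exceeds `threshold`, or None if it never does."""
    cumulative = 0.0
    for index, reading in enumerate(readings):
        # Only deviations above target + slack add to the running sum;
        # the sum is never allowed to drop below zero.
        cumulative = max(0.0, cumulative + (reading - target - slack))
        if cumulative > threshold:
            return index
    return None


# Hypothetical oil content readings: each one is only slightly above normal.
ocm_readings = [5.0, 6.0, 7.0, 7.0, 7.0, 7.0, 7.0]
alarm_index = first_cusum_alarm(ocm_readings, target=5.0, slack=1.0, threshold=4.0)
print(alarm_index)  # prints 6
```

No single reading in this made-up series looks alarming, yet the accumulated drift raises a flag at the seventh reading. The choice of `slack` and `threshold` reflects the trade-off noted above: a setting that is too sensitive would demand action at every suspicion.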

2. Cognitive bias in decision-making

Cognitive bias means the innate tendency for cognitive activity – ranging from the perception and mental interpretation of sensory information, through to judgement, and decision making – to be influenced or swayed, i.e. biased, by emotion or lack of rationality.

Cognitive bias is fundamental to human cognition and can have both negative and positive effects. Positive effects include enabling faster judgement and decision-making than would be possible if all the information available to thinking had to be processed equally. Negative effects can include a distorted interpretation of the state of the world, poor assessment of objective risk, and poor decision-making. Cognitive bias can take many different forms and can operate in many different ways.

According to the study, incident investigations in the oil & gas sector often contain indications of decisions being made in ways that strongly suggest the influence of cognitive bias. The most common forms include:

Response bias: The Theory of Signal Detection (TSD) may be used to explain the concept. The classic, conceptually simple, example of the use of TSD concerns radar operators who (before current generations of radar and display technologies were developed) had to make decisions about whether a target had been detected based on visual observation of a noisy radar display. The operators had to decide whether a patch of light on the radar screen was actually a “signal” indicating a target (e.g. a hostile aircraft or missile) in the world, or “noise”, such as electronic noise, or radar returns reflecting off water, rocks or the atmosphere. Similarly, in oil, gas and maritime operations the decision whether to intervene is influenced by many factors, including:

- the perceived costs of incurring a delay to an operation.

- whether the individual would be held personally to account if the intervention was unnecessary (or, conversely, whether they would be held to account for not intervening).

- how peers and colleagues would view the intervention.

- trust that the system is robust enough to handle a disturbance even if one does occur.

Response bias is also strongly influenced by what is known as “risk habituation”. People will tend to under-estimate (become habituated to) risk associated with tasks they perform regularly and that are usually completed without incident.
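The response bias described above can be made concrete with the standard equal-variance Gaussian form of signal detection theory, in which sensitivity (d') and the decision criterion (c) are estimated from hit and false-alarm rates, and an ideal observer's likelihood-ratio threshold (beta) depends on prior odds and payoffs. The following is a minimal sketch under that assumption, not a method given in the OGP report; the function names and all the rates and payoff numbers are hypothetical.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1


def sensitivity_and_criterion(hit_rate: float, false_alarm_rate: float):
    """Return (d_prime, criterion_c) for the equal-variance Gaussian model.

    Both rates must lie strictly between 0 and 1.
    """
    z_hit = _STD_NORMAL.inv_cdf(hit_rate)
    z_fa = _STD_NORMAL.inv_cdf(false_alarm_rate)
    d_prime = z_hit - z_fa               # how well signal is separated from noise
    criterion_c = -0.5 * (z_hit + z_fa)  # > 0 means reluctant to report a signal
    return d_prime, criterion_c


def optimal_beta(
    p_signal: float,
    value_hit: float,
    cost_miss: float,
    value_correct_rejection: float,
    cost_false_alarm: float,
) -> float:
    """Likelihood-ratio threshold an ideal observer would adopt.

    A larger value means more evidence is required before intervening.
    """
    prior_odds = (1.0 - p_signal) / p_signal
    payoff_ratio = (value_correct_rejection + cost_false_alarm) / (value_hit + cost_miss)
    return prior_odds * payoff_ratio


# Hypothetical operator: detects 80% of real problems, 20% false alarms.
d_prime, criterion = sensitivity_and_criterion(hit_rate=0.8, false_alarm_rate=0.2)

# Same operator, but an unnecessary intervention (delay) is perceived as 5x costlier.
cheap_delay = optimal_beta(0.5, 1.0, 1.0, 1.0, 1.0)
costly_delay = optimal_beta(0.5, 1.0, 1.0, 1.0, 5.0)
print(round(d_prime, 2), cheap_delay, costly_delay)
```

With these made-up payoffs the threshold rises from 1.0 to 3.0 when an unnecessary delay is seen as costly. The operator's ability to detect a problem is unchanged, but the willingness to act on it drops, which is the essence of response bias.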

Risk framing and loss aversion: The term “loss aversion” refers to the bias towards favouring avoiding losses over acquiring gains. Most people would go to significantly more effort to avoid what they perceive as a loss, than they would to achieve a gain of the same value. Research has demonstrated that the desire to avoid a loss can be twice as powerful in motivating behaviour and decision making, as the desire to seek an equally valued gain. Because of the way it is framed, the perception of risk in the minds of the workforce can be fundamentally misaligned with the reality of the safety or environmental risks that are actually faced.
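To illustrate the “twice as powerful” claim, the sketch below weights losses by a factor of 2 and leaves gains unchanged. This piecewise-linear form is a deliberate simplification assumed for illustration; the names `LOSS_AVERSION`, `subjective_value` and `subjective_expected_value`, and the example gamble, are hypothetical and do not come from the report.

```python
from typing import Iterable, Tuple

# Assumption: losses weigh twice as much as equal gains, per the text above.
LOSS_AVERSION = 2.0


def subjective_value(outcome: float, loss_aversion: float = LOSS_AVERSION) -> float:
    """Felt value of an outcome: gains count at face value, losses are amplified."""
    if outcome >= 0:
        return outcome
    return loss_aversion * outcome


def subjective_expected_value(
    gamble: Iterable[Tuple[float, float]],
    loss_aversion: float = LOSS_AVERSION,
) -> float:
    """Probability-weighted sum of felt values for (probability, outcome) pairs."""
    return sum(p * subjective_value(x, loss_aversion) for p, x in gamble)


# A 50/50 chance of gaining or losing the same amount (arbitrary units).
fair_gamble = [(0.5, 100.0), (0.5, -100.0)]
objective = sum(p * x for p, x in fair_gamble)  # 0.0: neutral on paper
felt = subjective_expected_value(fair_gamble)   # -50.0: feels like a net loss
print(objective, felt)
```

A gamble that is objectively neutral feels like a net loss under this weighting. That is one way to read how framing a delay or shutdown as a loss can outweigh an equally sized safety gain in the minds of the workforce.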

Commitment to a course of action: People find it extremely difficult to change from a course of action once committed to it. This is especially true when the commitment includes some external, public, manifestation. Internalised commitments that are never verbalised or shared with anyone else are much easier to break. Committing to a course of action does not simply mean deciding to pursue it; it means engaging at an emotional level. This emotional commitment brings psychological forces into play that can be very powerful in motivating not only how we behave, but how we see and understand what is happening in the world around us (i.e. our situation awareness).

Recent research by NASA and others has investigated the concept of “plan-continuation errors”. This work has looked at the tendency for pilots to continue with a plan even when conditions have changed, risks have increased, and they should really re-evaluate and change plan. One study has shown that, in a simulator, the closer pilots were to their destination, the less likely they were to change their course of action. Changing a plan requires effort and can be stressful.

Mental heuristics: At least two powerful heuristics may influence operational decision making:

- The availability heuristic: the tendency to predict the likelihood of an event based on how easily an example can be brought to mind, giving priority to information that is readily available. The alternative, non-biased, approach is to actively look for information relating to all events of interest. This heuristic can result in neglecting to adequately consider the likelihood of events for which information is not readily available.

- The representativeness heuristic: the tendency to judge the likelihood of an event by how closely it resembles a typical or familiar case, rather than by how often such events actually occur. Like the availability heuristic, it is common and extremely useful in everyday life, but it can mislead when the situation only looks familiar.

3. Inter-personal behaviour

Inter-personal behaviours are clearly extremely important when teams have to work together to ensure safety. This has been recognised and studied for many years, particularly in the aviation industry. Air crash investigators have concluded a number of times that inter-personal factors have interfered with sharing of information that could have avoided some high profile air accidents.

Some incident reports in the oil & gas sector include indications that inter-personal factors had contributed to individuals either not sharing and using information that was available to them, or not effectively challenging decisions that they believed were wrong.

The same applies, of course, to the maritime industry. Just think of a Chief Officer who does not get along with the Master, or of office personnel who do not follow the instructions of the DPA.

4. Awareness and understanding of safety-critical human tasks

The notion of “safety-critical human tasks” (sometimes known as “HSE critical activities”) is widely recognised. A “safety-critical human task” is an activity that has to be performed by one or more people and that is relied on to develop, implement or maintain a safety barrier. These tasks usually rely on human performance either because they inherently depend on human decision-making, or because it is not technically or practically possible to remove or automate them. In any event, no level of automation can avoid some level of human involvement in an operation, for example in maintenance and inspection, whether locally or remotely.

To sum up, improved understanding and management of the cognitive issues that underlie risk assessment and safety-critical decision-making could make a significant contribution to further reducing the potential for safety-related incidents. In line with the above, a company should work towards adopting practices to identify and understand safety-critical human tasks, as well as on the operational and management practices that should be in place to ensure personnel are able to perform these tasks reliably. That means:

- avoidance of distractions;

- ensuring alertness (lack of fatigue);

- design to support performance of critical tasks in terms of use of automation, user interface design and equipment layout;

- increasing sensitivity to weak signals; and

- providing a culture that rewards mindfulness when performing any safety critical activity.

There is significant potential for learning and improvement within the industry, starting with increased awareness of the psychological basis of human performance. Although the study discussed above refers mainly to the oil and gas industry, many of its lessons can find their way to the maritime industry as well.

The OGP report can be found on OGP’s website.

For further reading related to the subject please refer to the following:

- Woods, D.D., Dekker, S., Cook, R., Johannesen, L. & Sarter, N. (2010) ‘Behind Human Error’, Ashgate

- Endsley, M. (2011) ‘Designing for Situation Awareness: An Approach to User-Centred Design’, 2nd Edition, CRC Press

- Weick, K.E. & Sutcliffe, K.M. (2007) ‘Managing the Unexpected: Resilient Performance in an Age of Uncertainty’, 2nd Edition, John Wiley

- Kahneman, D. (2011) ‘Thinking, Fast and Slow’, Farrar, Straus and Giroux

- Brafman, O. & Brafman, R. (2008) ‘Sway: The Irresistible Pull of Irrational Behaviour’, Doubleday

- Flin, R., O’Connor, P. & Crichton, M. (2008) ‘Safety at the Sharp End’, Ashgate

- Columbia Accident Investigation Board, Volume 1 (2003)

- Sneddon, A., Mearns, K. & Flin, R. (2006) ‘Situation Awareness and Safety in Offshore Drill Crews’, Cogn Tech Work, 8, pp. 255-267

- Klein, G. (1999) ‘Sources of Power’, MIT Press

- UK Health and Safety Executive (2003) ‘Factoring the human into safety: Translating research into practice. Crew Resources Management Training for Offshore Operations’, Volume 3, Research Report 061

- Vicente, K. (2004) ‘The Human Factor’, Routledge
